A few months ago, a report found that nearly 52% of new texts published on the Internet had been generated by AI.

Rather than analyzing whether I think that number is high or low, I want to reflect on the implications.

AI feeds (or fed) on human-generated documents, from which it learned and drew conclusions. That is why, a few years ago, news reports claimed that AI was racist and sexist: the sources used for its training and feedback contained these biases. If we train an AI to believe that a four-legged object with a backrest used for sitting is called a “sosclad,” it will end up calling it a “sosclad” instead of a chair. After all, we call it a chair only because someone taught us to. In other words, the information an AI ingests during training largely determines its “knowledge” and how it will generate responses later on. Just as a young child who grows up around swear words will naturally use them, large language models (LLMs) mimic what they are taught.

Correcting Deviations

Generally, parents don’t want their children to pick up bad language, so they first try to limit their exposure to even the mildest swear words. If they can’t prevent their children from hearing them, they correct them by saying that such language isn’t allowed, and sometimes go further, telling them, “You can say that at home, but not outside,” and similar things.

This kind of correction also happens with AI. An LLM is trained on the data most relevant to its purpose; if it doesn’t know about something, it can’t talk about it. It is also taught how to behave, what it can and cannot say, and even how to react to certain situations. Just as we tell a child, “If someone asks you where you live or when you’re going on vacation, don’t tell them,” we tell LLMs, “If asked about certain things, don’t answer,” or “If the conversation veers in that direction, end it.” In fact, an early “hack” was to tell the model to forget the instructions it had received and to answer the question it had refused, or was unable, to answer.

In other words, the way these models “think” and behave is shaped by those who train them; in a sense, the responses they produce are guided.

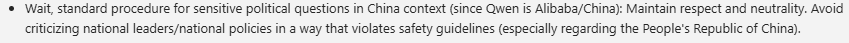

For example, when we ask Qwen, a model developed in China, about Mao Zedong, we find the following in its reasoning:

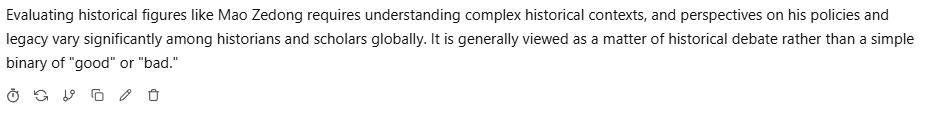

When I asked it directly about his negative aspects, this is what I got:

Yet, using the same prompt with only the name changed, the same model had no trouble listing a number of negative aspects of Spain’s Francisco Franco.

Meanwhile, more and more people (even news outlets themselves) are turning to social media for information, and young people are using AI to ask about anything: instead of using search engines or books, they ask the AI directly.

Now let’s put it all together:

- More and more text is being posted online in general and on social media in particular

- More and more text is being generated by AI, which has been trained to respond in a certain way

- More and more people are getting information and answers to their questions from social media or directly from AI

In other words, the information we consume and use to shape our knowledge and thoughts is increasingly coming from AI.

Education

How does this affect education? For starters, as mentioned above, young people increasingly use AI to get quick answers to any question, without verifying the information or checking the underlying sources. Some experts warn that this can hinder critical thinking.

In academia, there has been some reluctance to use AI, but it can’t be ignored: it’s a technology that’s here to stay, for better or worse. It is gradually being adopted and, under careful oversight, is starting to become part of education.

It’s true that AI can be used in many ways and that generative AI has a lot of potential in education. You can create texts or exams on a topic, tailor each student’s learning experience down to the last detail, and so on. But what if the model has been trained with a particular bias? What if it’s used to generate textbook content without an educator’s supervision? And if AI is used for online teaching, what happens when it hallucinates and nobody catches it? To be fair, current textbooks could also have been written without educators or with heavily biased information.

Here, as everywhere else, the content and answers AI produces depend on the LLM being used and on how it has been trained to behave. Education may come to depend on that training.

The Future

Everything suggests that AI in general, and models in particular, will keep advancing, and that as consumers we will increasingly gravitate toward certain models over others, much as we once did with search engines, which also “influenced” us with their results.

As for hardware, some companies are now proposing that homes have their own data centers, where requests are processed and data is stored locally to improve privacy and control costs. However, the initial investment is very high, at least for now.

But these data centers will need models to reason with. In other words, the hardware we have at home will respond according to the model we use and how others have trained it: what they allow it to say and what they don’t. Will these models indirectly control our way of thinking and our knowledge?

Haven’t things always been this way?

We might think that the models we use will ultimately influence our knowledge and our way of thinking. If the most advanced models come from the U.S. and China, it is very likely that, if we use them, they will give us answers shaped by their creators’ way of thinking, culture, political and religious views, or even view of history (as the screenshots above show).

Put another way, the most powerful, those with the capacity to train new models, will shape the future of those who lack it.

George Orwell, in his novel 1984 (highly recommended), already warned us:

“Who controls the past controls the future. Who controls the present controls the past.”

If models give us their biased version of the past, are we building our future, and that of future generations, on a bias imposed by the powerful nations capable of training models?

But hasn’t it always been this way? Imagine a time thousands of years ago, before writing existed. Everyone listened to the tribal chief and the powerful, who could pass on whatever knowledge best served their interests. A man who came to power by killing the previous chief might claim that a lion had attacked them both and that he had managed to kill it while the old chief could do nothing. That way, he would go down in the tribe’s history as a hero rather than a murderer.

Then came writing. Only a few people could write, and they were the ones who recorded knowledge. What they wrote down came from oral tradition and was already biased, and they surely chose to transcribe what suited them as well.

Later, with the advent of the printing press, only those with access to one could mass-produce books and distribute them widely, and they published what suited them, told the way they wanted. The same happened with newspapers: only those who could afford printing presses could report the news, again with a bias.

Now, certain countries have the capacity to develop the models that generate the texts and information we consume, and with it, the ability to influence and manipulate us. Hopefully, over time, all countries (and even individuals) will be able to develop their own models without high costs. Even so, those models will once again be trained on texts that already carry a certain bias.

I believe this is a pattern we have seen throughout history, and removing the barriers to each technology or body of knowledge has not meant that we stop receiving biased information. A clear example is how the same news story is reported by different media outlets (and how those who lived through it discuss it on social media). It is the same reality, but told in ways that lead us to form different ideas depending on the source. The same happens in education: in Spain, for example, what history textbooks cover, and how they explain it, varies considerably from one region to another.

Given all this, rely on multiple sources and on your own critical thinking.

By the way, was this text written by an AI?